Until Logstash starts with an active Beats plugin, there won’t be any answer on that port, so any messages you see regarding failure to connect on that port are normal for now.

The Filebeat registry file can be deleted from the command line.

Filebeat, Metricbeat and Kibana), as well as download a Docker image for an.

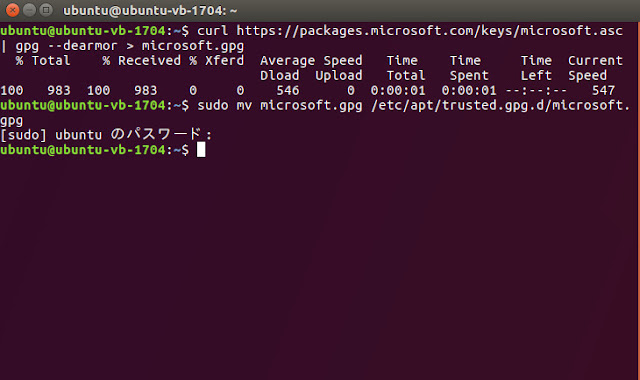

Open the filebeat.yml file located in your Filebeat installation directory, and replace the contents with the tutorial configuration. Make sure paths points to the example Apache log file, logstash-tutorial.log, that you downloaded earlier. Then run Filebeat:

filebeat -e -c filebeat.yml -d "publish"

Filebeat will attempt to connect on port 5044. The same command can be run in the background by appending &.

Before going on, download the required VNF and NS packages from this URL.

A related project ships application logs and metrics to Elasticsearch using Beats and the GELF plugin, and deploys the Elastic stack in a Docker Swarm cluster.

In the monitoring settings, monitoring.enabled: false leaves the monitoring reporter off. monitoring.cluster_uuid sets the UUID of the Elasticsearch cluster under which monitoring data for this Filebeat instance will appear in the Stack Monitoring UI. If output.elasticsearch is enabled, the UUID is derived from the Elasticsearch cluster.
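Since connection failures on port 5044 are expected until Logstash is listening, a quick way to tell "not started yet" from a real problem is to probe the port directly. The following is a minimal sketch using only the Python standard library. The host name localhost and the helper name port_is_open are assumptions for illustration; only the port number 5044 comes from the text.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts and name-resolution failures.
        return False


if __name__ == "__main__":
    # "localhost" is an assumption; 5044 is the Beats port named in the text.
    if port_is_open("localhost", 5044):
        print("Something is listening on 5044")
    else:
        print("Nothing is listening on 5044 yet")
```

This only confirms that some process accepts TCP connections on the port. It does not verify that the listener is the Logstash Beats input.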

Set monitoring.enabled to true to enable the monitoring reporter.

Filebeat has a light resource footprint on the host machine, and the Beats input plugin minimizes the resource demands on the Logstash instance. Filebeat is designed for reliability and low latency.

To get started, go here to download the sample data set used in this example.

Step 3 – Configure a filebeat.yml with a log file

Filebeat is a lightweight, open source shipper for log file data. The Filebeat client is a resource-friendly tool that collects logs from files on the server and forwards these logs to your Logstash instance for processing.

Filebeat can also be installed from the command line or from PowerShell; the package was approved by moderator flcdrg.

Filebeat 5.1.2 apt repo (Issue 3385, elastic/beats, GitHub): Due to the release of Filebeat 5.1.2 the old release was deleted from the apt repo. This is a problem because chef cookbooks and other scripts include version constraints. Please keep the old releases published in order to allow a smooth.

I'm parsing IIS logs and am unable to get the 'message' field to parse in Elasticsearch. This is my filebeat.yml file (relevant lines) output:

When I index a document through curl with ?pipeline=filebeat_pipeline at the end of the request and pull back the data, I get a properly parsed 'message:' field. I don't, however, get a properly parsed 'message:' field through the normal log pushes. In Kibana I still see the whole log in the 'message:' field.

I've run filebeat.exe with the -configtest and -e flags and no issues were reported. I'm not seeing anything in the logs on either the Filebeat side or the Elasticsearch side. Below is the unformatted JSON.

The only thing I haven't done is restart the cluster, but according to the documentation pipelines are updated without having to restart services. I updated filebeat.yml and saw no change in behavior.

Also, for what it's worth, I'm not doing this update across all my Filebeat servers, just a single one for testing. Once I establish working pipelines, I'll migrate the change across the board.
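To make the curl test from the question concrete, here is a minimal sketch of how a request with the ?pipeline= query parameter can be built. The base URL http://localhost:9200, the index name iis-test, the sample document and the helper name build_index_request are all hypothetical; only the pipeline name filebeat_pipeline comes from the post. The _doc endpoint is the form used by recent Elasticsearch versions, and older releases expect a type name in its place.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request


def build_index_request(base_url: str, index: str, doc: dict, pipeline: str) -> Request:
    """Build (but do not send) a POST that indexes doc through an ingest pipeline."""
    # The ingest pipeline is selected with the "pipeline" query parameter.
    query = urlencode({"pipeline": pipeline})
    url = f"{base_url.rstrip('/')}/{index}/_doc?{query}"
    body = json.dumps(doc).encode("utf-8")
    return Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


if __name__ == "__main__":
    # Hypothetical host, index and document; only the pipeline name is from the post.
    req = build_index_request(
        "http://localhost:9200",
        "iis-test",
        {"message": "raw IIS log line goes here"},
        "filebeat_pipeline",
    )
    print(req.get_method(), req.full_url)
```

A request built this way names the pipeline explicitly, which is why the manual test parses the field. For normal log pushes, Filebeat's Elasticsearch output has its own pipeline option; whether it was set in the poster's configuration is not shown in the excerpt.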
